Reflections on AI conversations at Researcher to Reader 2026.

To get things going, my direction of travel right now is through the Channel Tunnel back home to France, after another hugely enjoyable and thought-provoking Researcher to Reader (R2R) conference in London. The event was notable for the announcement that this will be Mark Carden’s last as conference owner and chair, following the acquisition by open science champion Tiberius Ignat’s SKS business based in Germany. The good news is that Tiberius plans a process of evolution rather than revolution for this marquee event, benefiting enormously from Mark’s ongoing involvement in an advisory capacity. R2R is in good hands.

As ever, the threats and opportunities presented by AI dominated the plenary sessions, workshops and conversations at R2R. No surprises that the AI-themed workshop was over-subscribed and, as workshops co-managers, Jayne Marks (Maverick Publishing Consultants) and I had to disappoint a few people by asking them to attend their second-choice workshop instead. On behalf of the conference organisers and participants, I would like to echo the thanks I gave during the event to all the workshop facilitators. Planning and delivering these workshops is a huge undertaking by those who volunteer and is a key contributor to the appeal and value of R2R. Initial feedback confirms what we already knew, that all of the workshops – covering a diverse range of topics – were excellent!

There is often a sense, including in the AI discussions we have at scholarly publishing events, that we are somehow passengers being carried along by an unstoppable AI momentum, driven by an oligarchic group of tech leaders being encouraged to abandon ethical guardrails by profit motives and securing patronage from the current U.S. Administration. This month’s METR report showed the rapid (and arguably terrifying) exponential growth in the software development capabilities of various AI models, measured as task-completion time horizons. The media headline on this report is that AI is getting twice as good every seven months.

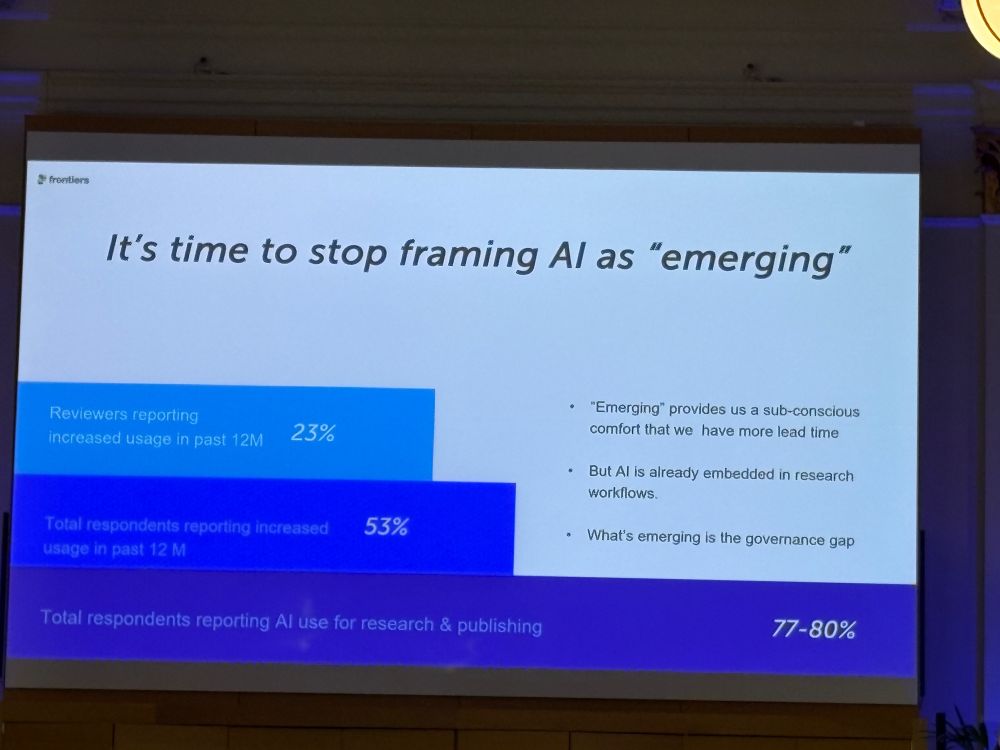

This data from METR points to some important themes which emerged from three excellent plenary talks during R2R. Firstly, as Simone Ragavooloo (Frontiers) urged us to recognise in her presentation on responsible AI adoption, we are a long way past AI being an ‘emerging’ technology to which we still have time to adapt as an industry (Figure 1 is a screenshot from Simone’s presentation). It is already embedded in everyday lives and increasingly in business processes. It is not going to go away.

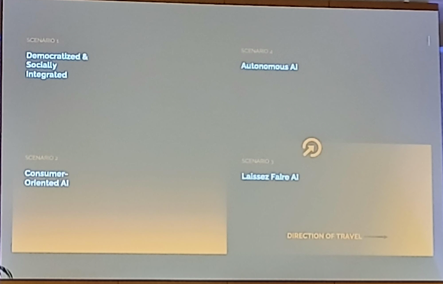

Secondly, the speed of AI progression in an increasingly unregulated commercial environment reinforces the feeling that we are at the mercy of the AI tech bros and their governmental sponsors. As things stand, this does seem like the most likely direction of travel. Keith Webster (Carnegie Mellon University) described four potential futures for AI which he has been tracking over the last decade or so (see Figure 2 from Keith’s presentation).

We like to think that we are headed towards a regulated collaborative environment which Keith describes as ‘democratically and socially integrated’ with humans very much in the AI loop – the thing we all talk about when describing how AI tools should be supporting scholarly publishing. If we can’t reach this scenario in the near-term, perhaps the market will drive AI development and best practice, as a next-best / next-least-worst outcome. As consumers, we will drive the direction of travel. Perhaps.

In Keith’s estimation, the current AI landscape sits somewhere between the scenarios he describes as ‘laissez faire’ and ‘autonomous’, with the direction of travel towards the latter. Unregulated scraping of content coupled with reckless adoption leads to misinformation and disinformation – sound familiar? And the METR report seemingly supports the trend towards autonomous AI, perhaps much sooner than we realise or hope. In this landscape, Keith did offer a ray of hope in that high-quality human peer review will maintain and increase its value to the research process.

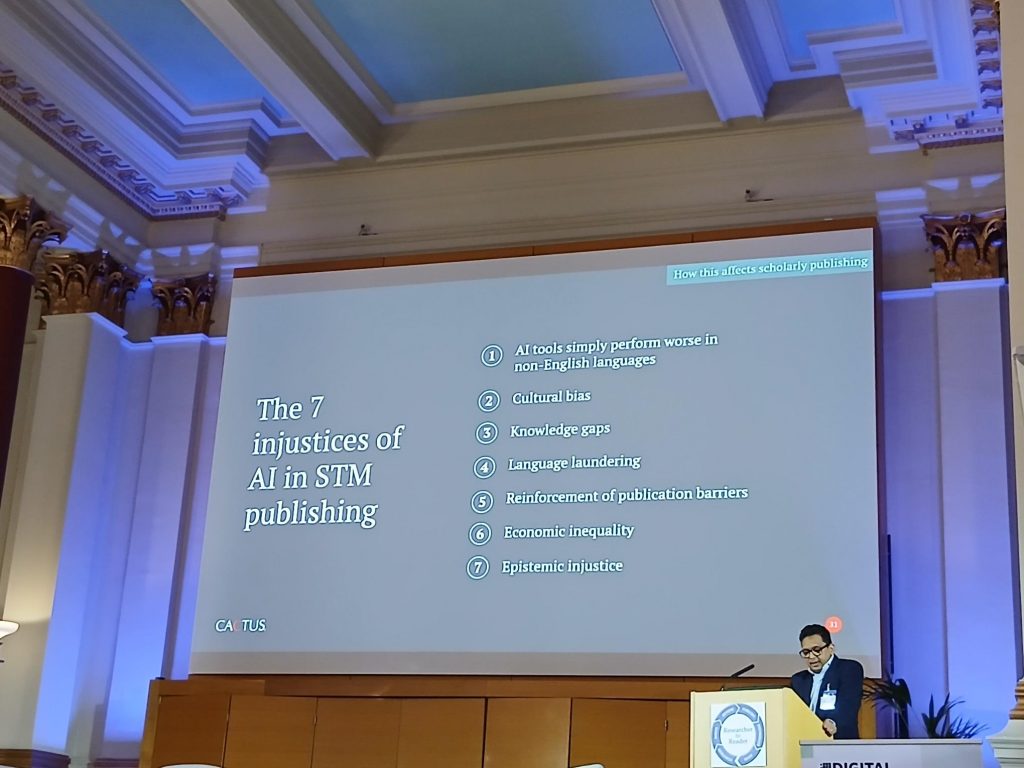

The third plenary talk which really struck home with the audience – myself included – was Nikesh Gosalia (Cactus) asking us to consider whether AI promotes equity or division. AI tools certainly have the potential to help democratise the scholarly publishing process, as demonstrated by the recent proliferation of AI tools designed to support writing and collaboration and to make specific quality checks. Cactus and Kriyadocs (for whom I am Growth Director) are two such technology providers.

Nikesh’s powerful talk highlighted ‘seven injustices of AI in STM publishing’ (Figure 3) and brought us back to the idea that commercial imperatives often drive change. His example of Kodak only agreeing to develop film stock which could adequately represent dark skin tones when chocolate manufacturers and furniture companies complained about the poor quality of their print advertisements, rather than doing so through a sense of ethical and moral responsibility, was shocking. And Nikesh argues that the AI industry is repeating this behaviour today.

During the hugely entertaining R2R debate between Amanda Licastro (Swarthmore College) and Darrell Gunter (Gunter Media Group) as to whether AI tools provide a net benefit to scholarly communication, David Prosser (RLUK) made an excellent observation from the conference floor which neatly summarised where we are as an industry – and arguably as a society – with AI. To paraphrase David’s remarks as accurately as I can recall, we are arguing between a utopian AI future (perhaps Keith’s scenario of a democratised socially integrated AI world) and a dystopian AI present which may already be too late to reverse.

For the record, Amanda moved audience opinion against the motion and won the debate! Which takes us back to how we all feel right now about our direction of travel. The question is… can we collectively grab the steering wheel in time? Or are we already over the cliff, Charlie?

Featured image from The Italian Job (1969)